By Robert Slavin and Amanda Neitzel, Johns Hopkins University

In two recent blogs (here and here), I’ve written about Baltimore’s culinary glories: crabs and oysters. My point was just that in both cases, there is a lot you have to discard to get to what matters. But I was of course just setting the stage for a problem that is deadly serious, at least to anyone concerned with evidence-based reform in education.

Meta-analysis has contributed a great deal to educational research and reform, helping readers find out about the broad state of the evidence on practical approaches to instruction and school and classroom organization. Recent methodological developments in meta-analysis and meta-regression, and promotion of the use of these methods by agencies such as IES and NSF, have expanded awareness and use of modern methods.

Yet looking at large numbers of meta-analyses published over the past five years, even up to the present, the quality is highly uneven. That’s putting it nicely. The problem is that most meta-analyses in education are far too unselective with regard to the methodological quality of the studies they include. Actually, I’ve been ranting about this for many years, and along with colleagues, have published several articles on it (e.g., Cheung & Slavin, 2016; Slavin & Madden, 2011; Wolf et al., 2020). But clearly, my colleagues and I are not making enough of a difference.

My colleague, Amanda Neitzel, and I thought of a simple way we could communicate the enormous difference it makes if a meta-analysis accepts studies that contain design elements known to inflate effect sizes. In this blog, we once again use the Kulik & Fletcher (2016) meta-analysis of research on computerized intelligent tutoring, which I critiqued in my blog a few weeks ago (here). As you may recall, the only methodological inclusion standards used by Kulik & Fletcher required that studies use RCTs or QEDs, and that they have a duration of at least 30 minutes (!!!). However, they included enough information to allow us to determine the effect sizes that would have resulted if they had a) weighted for sample size in computing means, which they did not, and b) excluded studies with various features known to inflate effect size estimates. Here is a table summarizing our findings when we additionally excluded studies containing procedures known to inflate mean effect sizes:

If you follow meta-analyses, this table should be shocking. It starts out with 50 studies and a very large effect size, ES=+0.65. Just weighting the mean for study sample sizes reduces this to +0.56. Eliminating small studies (n<60) cuts the number of studies almost in half (to 27) and cuts the effect size to +0.39. But the largest reductions are due to excluding “local” measures, which on inspection are always measures made by developers or researchers themselves. (The alternative was “standardized measures.”) By itself, excluding local measures (and weighting) cuts the number of included studies to 12, and the effect size to +0.10, which is not significantly different from zero (p=.17). Also excluding small and brief studies only slightly changes the results, because both small and brief studies almost always use “local” (i.e., researcher-made) measures. Excluding all three, and weighting for sample size, leaves this review with only nine studies and an effect size of +0.09, which is not significantly different from zero (p=.21).
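To make the mechanics concrete, here is a minimal sketch of the two steps described above: weighting the mean by sample size, then excluding studies with biasing features. The four studies are invented purely for illustration and are not taken from Kulik & Fletcher. The `Study` class, the function names, and the 12-week cutoff for "brief" are assumptions; only the n<60 cutoff for small studies comes from the text. Note also that many meta-analyses weight by inverse variance rather than raw sample size; this sketch uses sample-size weights because that is what the text describes.

```python
from dataclasses import dataclass


@dataclass
class Study:
    n: int               # total sample size
    weeks: float         # study duration in weeks
    local_measure: bool  # True if the outcome measure was researcher- or developer-made
    es: float            # reported effect size


def unweighted_mean(studies):
    """Simple average of effect sizes, ignoring sample size."""
    return sum(s.es for s in studies) / len(studies)


def weighted_mean(studies):
    """Average of effect sizes weighted by each study's sample size."""
    total_n = sum(s.n for s in studies)
    return sum(s.n * s.es for s in studies) / total_n


def apply_selective_standards(studies, min_n=60, min_weeks=12):
    """Drop small studies, brief studies, and studies using local measures.

    min_weeks=12 is an assumed threshold for this sketch.
    """
    return [
        s for s in studies
        if s.n >= min_n and s.weeks >= min_weeks and not s.local_measure
    ]


# Hypothetical studies, invented for illustration only.
studies = [
    Study(n=30, weeks=1, local_measure=True, es=0.90),
    Study(n=40, weeks=2, local_measure=True, es=0.70),
    Study(n=200, weeks=20, local_measure=False, es=0.10),
    Study(n=500, weeks=30, local_measure=False, es=0.05),
]

selective = apply_selective_standards(studies)

print(f"All studies, unweighted:     {unweighted_mean(studies):+.2f}")
print(f"All studies, weighted by n:  {weighted_mean(studies):+.2f}")
print(f"Selective standards, weighted ({len(selective)} studies): "
      f"{weighted_mean(selective):+.2f}")
```

Even in this toy data set, the same pattern appears: the small, brief studies with local measures carry the largest effect sizes, so each step of weighting and exclusion pulls the mean down.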

The estimates at the bottom of the chart represent what we call “selective standards.” These are the standards we apply in every meta-analysis we write (see www.bestevidence.org), and in Evidence for ESSA (www.evidenceforessa.org).

It is easy to see why this matters. Reviews with selective standards almost always produce much lower estimates of effect sizes than do reviews with much less selective standards, because the latter include studies containing design features that have a strong positive bias on effect sizes. Consider how this affects mean effect sizes in meta-analyses. For example, imagine a study that uses two measures of achievement. One is a measure made by the researcher or developer specifically to be “sensitive” to the program’s outcomes. The other is a test independent of the program, such as GRADE/GMADE or Woodcock (standardized tests, but not necessarily state tests). Imagine that the researcher-made measure obtains an effect size of +0.30, while the independent measure has an effect size of +0.10. A less-selective meta-analysis would report a mean effect size of +0.20, a respectable-sounding impact. But a selective meta-analysis would report an effect size of +0.10, a very small impact. Which of these estimates represents an outcome with meaning for practice? Clearly, school leaders should not value the +0.30 or +0.20 estimates, which require use of a test designed to be “sensitive” to the treatment. They should care about the gains on the independent test, which represents what educators are trying to achieve and what they are held accountable for. The information from the researcher-made test may be valuable to the researchers, but it has little or no value to educators or students.
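The arithmetic in the two-measure example can be written out in a few lines. This is only a sketch of the imagined study above; the dictionary layout and the `mean_es` helper are assumptions made for illustration.

```python
# Effect sizes from the imagined two-measure study described above.
measures = [
    {"name": "researcher-made", "independent": False, "es": 0.30},
    {"name": "independent", "independent": True, "es": 0.10},
]


def mean_es(items):
    """Simple average of the effect sizes in a list of measures."""
    return sum(m["es"] for m in items) / len(items)


# A less-selective review averages across both measures.
less_selective = mean_es(measures)

# A selective review keeps only the independent measure.
selective = mean_es([m for m in measures if m["independent"]])

print(f"Less selective: {less_selective:+.2f}")
print(f"Selective:      {selective:+.2f}")
```

Nothing about the program changed between the two numbers. The only difference is which measure the review was willing to count.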

The point of this exercise is to illustrate that in meta-analyses, choices of methodological exclusions may entirely determine the outcomes. Had the authors chosen other exclusions, the Kulik & Fletcher meta-analysis could have reported any effect size from +0.09 (n.s.) to +0.65 (p<.001).

The importance of these exclusions is not merely academic. Think how you’d explain the chart above to your sister the principal:

Principal Sis: I’m thinking of using one of those intelligent tutoring programs to improve achievement in our math classes. What do you suggest?

You: Well, it all depends. I saw a review of this in the top journal in education research. It says that if you include very small studies, very brief studies, and studies in which the researchers made the measures, you could have an effect size of +0.65! That’s like seven additional months of learning!

Principal Sis: I like those numbers! But why would I care about small or brief studies, or measures made by researchers? I have 500 kids, we teach all year, and our kids have to pass tests that we don’t get to make up!

You (sheepishly): I guess you’re right, Sis. Well, if you just look at the studies with large numbers of students, which continued for more than 12 weeks, and which used independent measures, the effect size was only +0.09, and that wasn’t even statistically significant.

Principal Sis: Oh. In that case, what kinds of programs should we use?

From a practical standpoint, study features such as small samples or researcher-made measures add a lot to effect sizes while adding nothing to the value that the programs or practices have for students or schools. They just add a lot of bias. It’s like trying to convince someone that corn on the cob is a lot more valuable than corn off the cob, because you get so much more quantity (by weight or volume) for the same money with corn on the cob. Most published meta-analyses only require that studies have control groups, and some do not even require that much. Few exclude researcher- or developer-made measures, or very small or brief studies. The result is that effect sizes in published meta-analyses are very often implausibly large.

Meta-analyses that include studies lacking control groups or studies with small samples, brief durations, pretest differences, or researcher-made measures report overall effect sizes that cannot be fairly compared to other meta-analyses that excluded such studies. If outcomes do not depend on the power of the particular program but rather on the number of potentially biasing features they did or did not exclude, then outcomes of meta-analyses are meaningless.

It is important to note that these two examples are not at all atypical. As we have begun to look systematically at published meta-analyses, most of them fail to exclude or control for key methodological factors known to contribute a great deal of bias. Something very serious has to be done to change this. Also, I’d remind readers that there are lots of programs that do meet strict standards and show positive effects based on reality, not on including biasing factors. At www.evidenceforessa.org, you can see more than 120 reading and math programs that meet selective standards for positive impacts. The problem is that in meta-analyses that include studies containing biasing factors, these truly effective programs are swamped by a blizzard of bias.

In my recent blog (here) I proposed a common set of methodological inclusion criteria that I would think most methodologists would agree to. If these (or a similar consensus list) were consistently used, we could make more valid comparisons both within and between meta-analyses. But as long as inclusion criteria remain highly variable from meta-analysis to meta-analysis, then all we can do is pick out the few that do use selective standards, and ignore the rest. What a terrible waste.

References

Cheung, A., & Slavin, R. (2016). How methodological features affect effect sizes in education. Educational Researcher, 45(5), 283-292.

Kulik, J. A., & Fletcher, J. D. (2016). Effectiveness of intelligent tutoring systems: A meta-analytic review. Review of Educational Research, 86(1), 42-78.

Slavin, R. E., & Madden, N. A. (2011). Measures inherent to treatments in program effectiveness reviews. Journal of Research on Educational Effectiveness, 4, 370-380.

Wolf, R., Morrison, J. M., Inns, A., Slavin, R. E., & Risman, K. (2020). Average effect sizes in developer-commissioned and independent evaluations. Journal of Research on Educational Effectiveness. DOI: 10.1080/19345747.2020.1726537

Photo credit: Deeper Learning 4 All, (CC BY-NC 4.0)

This blog was developed with support from Arnold Ventures. The views expressed here do not necessarily reflect those of Arnold Ventures.


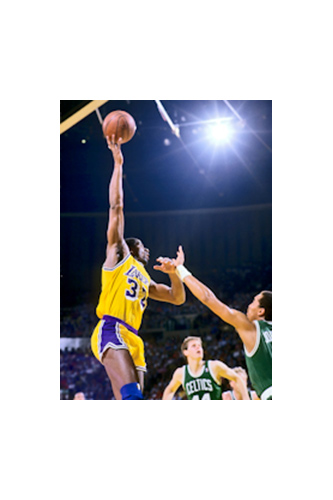

In educational research evaluating replicable programs and practices, our objectives are quite different. Sports reporting builds up heroes, because that’s what readers want to hear about. But in educational research, we want fair, complete, and meaningful evidence documenting the effectiveness of practical means of improving achievement or other outcomes. The problem is that academic publications in education also distort understanding of outcomes of educational interventions, because studies with significant positive effects (analogous to Magic’s best days) are far more likely to be published than are studies with non-significant differences (like Magic’s worst days). Unlike the situation in sports, these distortions are harmful, usually overstating the impact of programs and practices. Then when educators implement interventions and fail to get the results reported in the journals, this undermines faith in the entire research process.
