“Let me tell you, my dear Watson, about one of my most curious and vexing cases,” said Holmes. “I call it, ‘The Case of the Missing Programs’. A school superintendent from America sent me a letter. It appears that whenever she looks in the What Works Clearinghouse to find a program her district wants to use, nine times out of ten there is nothing there!”

Watson was astonished. “But surely there has to be something. Perhaps the missing programs did not meet WWC standards, or did not have positive effects!”

“Not meeting standards or having disappointing outcomes would be something,” responded Holmes, “but the WWC often says nothing at all about a program. Users are apparently confused. They don’t know what to conclude.”

“The missing programs must make the whole WWC less useful and reliable,” mused Watson.

“Just so, my friend,” said Holmes, “and so we must take a trip to America to get to the bottom of this!”

While Holmes and Watson are arranging steamship transportation to America, let me fill you in on this very curious case.

In the course of our work on Evidence for ESSA (www.evidenceforessa.org), we are occasionally asked by school district leaders why there is nothing on our website about a given program, text, or software. Whenever this happens, our staff immediately checks to see if there is any evidence we’ve missed. If we are reasonably sure that no studies of the missing program meet our standards, we add the program to our website, with a brief indication that there are no qualifying studies. If any studies do meet our standards, we review them as soon as possible and add the program as meeting or not meeting ESSA standards.

Sometimes, districts or states send us their entire list of approved texts and software, and we check each item to make sure it is included.

Having done this for more than a year, we now have an entry on most of the reading and math programs any district would come up with, though we keep receiving more all the time.

All of this seems to us to be obviously essential. If users of Evidence for ESSA look up their favorite programs, or ones they are thinking of adopting, and find that there is no entry, they begin losing confidence in the whole enterprise. They cannot know whether the program they seek was ignored or missed for some reason, or has no evidence of effectiveness, or perhaps has been proven effective but has not been reviewed.

Recently, a large district sent me their list of 98 approved and supplementary texts, software, and other programs in reading and math. They had marked each according to the ratings given by the What Works Clearinghouse and Evidence for ESSA. At the time (a few weeks ago), Evidence for ESSA had listings for 67% of the programs. Today, of course, it has 100%, because we immediately set to work researching and adding in all the programs we’d missed.

What I found astonishing, however, is how few of the district’s programs were mentioned at all in the What Works Clearinghouse. Only 15% of the reading and math programs were in the WWC.

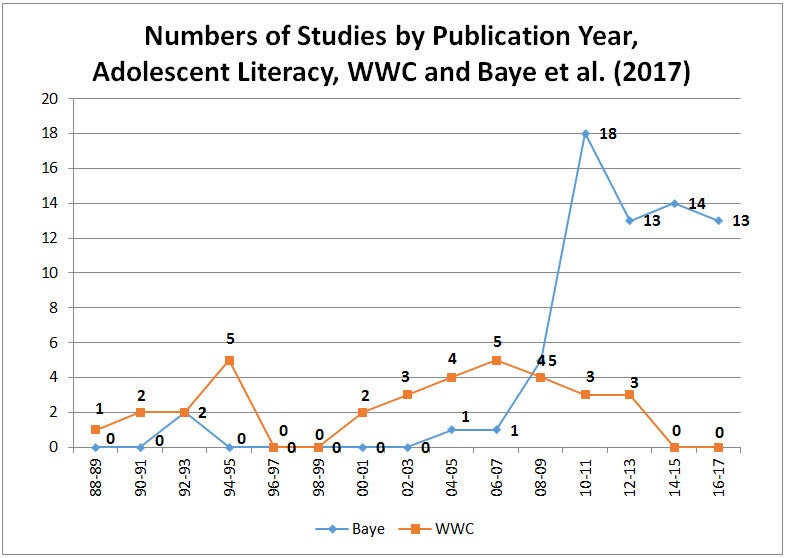

I’ve written previously about how far behind the WWC is in reviewing programs. But the problem with the district list was not just a question of slowness. Many of the programs the WWC missed have been around for some time.

I’m not sure how the WWC decides what to review, but they do not seem to be trying for completeness. I think this is counterproductive. Users of the WWC should expect to be able to find out about programs that meet standards for positive outcomes, those that have an evidence base that meets evidence standards but do not have positive outcomes, those that have evidence not meeting standards, and those that have no evidence at all. Yet it seems clear that the largest category in the WWC is “none of the above.” Most programs a user would be interested in do not appear at all in the WWC. Most often, a lack of a listing means a lack of evidence, but this is not always the case, especially when evidence is recent. One way or another, finding big gaps in any compendium undermines faith in the whole effort. It’s difficult to expect educational leaders to get into the habit of looking for evidence if most of the programs they consider are not listed.

Imagine, for example, that a telephone book were missing a significant fraction of the people who live in a given city. Users would be frustrated at not being able to find their friends, and the gaps would soon undermine confidence in the whole phone book.

****

When Holmes and Watson arrived in the U.S., they spoke with many educators who’d tried to find programs in the WWC, and they heard tales of frustration and impatience. Many former users said they no longer bothered to consult the WWC and had lost faith in evidence in their field. Fortunately, Holmes and Watson got a meeting with U.S. Department of Education officials, who immediately understood the problem and set to work to find the evidence base (or lack of evidence) for every reading and math program in America. Usage of the WWC soared, and support for evidence-based reform in education increased.

Of course, this outcome is fictional. But it need not remain fictional. The problem is real, and the solution is simple. Or as Holmes would say, “Elementary and secondary, my dear Watson!”

Photo credit: By Rumensz [CC0], from Wikimedia Commons

This blog was developed with support from the Laura and John Arnold Foundation. The views expressed here do not necessarily reflect those of the Foundation.